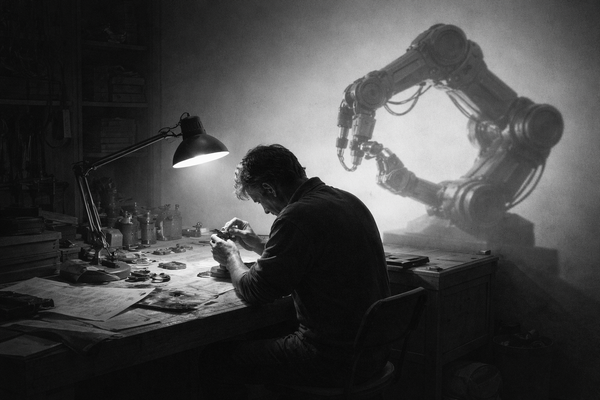

Find the five gaps before spending on AI

Companies see charts, diagnose behavioral gaps, buy a stack of AI tools, launch training programs, and wait for productivity gains. Later, the executive teams wonder why the demo looked so good.

Anthropic released a report last week that introduces a new way to measure AI's impact on the labor market. They call it “observed exposure”: compare what AI could theoretically do with what people are actually using it for, measured from real Claude usage data in professional settings.

The chart everyone is sharing tells a striking story. In Computer & Math occupations, AI could theoretically speed up 94% of tasks. In practice, it covers 33%. Legal: 80% theoretical, barely 20% actual. Business and Finance, Office and Admin, Management: the same pattern everywhere. A massive blue area of theoretical capability with a small red splatter of actual usage sitting inside it.

Anthropic reads this as headroom. The red will grow to fill the blue as adoption deepens, they write. The AI industry reads it the same way. The implication is clear: people are underusing the tools. Buy more seats. Train your teams. Adopt faster.

That reading is convenient for everyone selling AI. But for most of the companies buying it, it is wrong.

The gap between the blue and the red is being treated as one gap. An adoption gap. A behavioral gap that closes when people start using the tools they already have. But when you look at why the gap persists, across industries and occupational categories, you find at least five distinct gaps stacked on top of each other. They require completely different responses. And misdiagnosing which one you're facing is the most expensive mistake in enterprise AI right now.

A diagnostic, not a countdown

The behavioral gap. People haven't adopted tools that already work. The tool exists, performs well enough, and sits unused because of inertia, unfamiliarity, or a lack of management direction. This gap is real. It closes with training, habit change, and leadership that models usage. A change management effort will get you further than a technology partner here. But it is also the only one of the five gaps that the AI industry has an incentive to talk about, which is why it dominates the conversation.

The architectural gap. Systems don't talk to each other. Data isn't collected, or sits fragmented across silos that were never designed to connect. A recent Purdue study on agribusiness found that agricultural data is scattered across satellites, sensors, weather feeds, machinery, and agronomic logs, with no way to unify it. A Manufacturers Alliance study found 47% of manufacturers view data fragmentation as their primary obstacle. Better models won't close this gap. Engineering investment will.

The trust gap. The cost of AI error is asymmetric. A hallucinated email draft wastes two minutes. A hallucinated drug interaction recommendation, a miscalculated crop treatment, or a wrong financial compliance judgment can end a business. Even in finance, one of the most AI-exposed sectors, certain tasks remain manual because the downside of a mistake is existential. This gap closes only with validation infrastructure: guardrails, human-in-the-loop design, domain-specific evaluation. Investing in reliability before scale is the only way through.

The inflated-theory gap. The theoretical capability score assumes that making a task 2x faster equals readiness for deployment. A task done 2x faster at 80% reliability is often worse than a human doing it at 1x with 99% reliability, because every failure has to be caught and redone, and that rework can cost more than the time saved. Anthropic's own earlier Economic Index revised its productivity estimates downward by roughly half after accounting for error rates on complex tasks. The blue area is generous. Some of it will never turn red because the theory overpromises what practice can deliver. If your vendor is selling you the blue, ask to see the red.
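The speed-versus-reliability trade-off can be made concrete with a little arithmetic. The sketch below is illustrative only: the function names, the 60-minute task, and the rework costs are assumptions, not figures from the Anthropic report. It also assumes every failure is caught and costs a fixed amount of time to fix, which is a simplification.

```python
def expected_minutes_per_task(base_minutes, speedup, reliability, error_cost_minutes):
    """Expected time per task: time to do the work plus the expected cost of failures.

    Assumes (hypothetically) that each failure is detected and costs a fixed
    number of minutes to repair.
    """
    work_time = base_minutes / speedup
    expected_rework = (1.0 - reliability) * error_cost_minutes
    return work_time + expected_rework


def break_even_error_cost(base_minutes, ai_speedup, ai_reliability, human_reliability):
    """Error cost (in minutes) at which the faster, less reliable option stops winning."""
    if human_reliability <= ai_reliability:
        raise ValueError("break-even only exists when the human is more reliable")
    time_saved = base_minutes * (1.0 - 1.0 / ai_speedup)
    extra_failure_rate = human_reliability - ai_reliability
    return time_saved / extra_failure_rate


# Illustrative numbers: a 60-minute task, human at 1x and 99%, AI at 2x and 80%.
BASE = 60.0

# Cheap errors (30 minutes to fix): the AI comes out ahead.
human_cheap = expected_minutes_per_task(BASE, 1.0, 0.99, 30.0)
ai_cheap = expected_minutes_per_task(BASE, 2.0, 0.80, 30.0)

# Expensive errors (300 minutes to fix): the human comes out ahead.
human_costly = expected_minutes_per_task(BASE, 1.0, 0.99, 300.0)
ai_costly = expected_minutes_per_task(BASE, 2.0, 0.80, 300.0)

threshold = break_even_error_cost(BASE, 2.0, 0.80, 0.99)

print(f"cheap errors:  human {human_cheap:.1f} min, AI {ai_cheap:.1f} min")
print(f"costly errors: human {human_costly:.1f} min, AI {ai_costly:.1f} min")
print(f"break-even error cost: {threshold:.0f} min")
```

Under these assumed numbers, the faster tool wins only while a failure costs less than roughly two and a half hours to repair. Past that point the 2x speedup is a net loss, which is the part of the blue area that may never turn red.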

The economic gap. The task can be automated, the tool works, but the ROI doesn't justify the cost at current prices. This gap is closing the fastest. NVIDIA's GTC conference next week is expected to showcase chips that push inference costs down by an order of magnitude. Use cases that were marginal a year ago are becoming compelling. Patience is a legitimate strategy here, but probably not for long.
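A rough way to see why this gap can close on its own is to hold everything constant except the inference price. The sketch below uses entirely hypothetical numbers (task volume, value per task, per-task inference cost, fixed overhead) and a hypothetical function name; it is not drawn from any vendor's pricing.

```python
def monthly_net_value(tasks_per_month, value_per_task, inference_cost_per_task, fixed_monthly_cost):
    """Net monthly value of automating a task: per-task margin times volume, minus overhead."""
    margin_per_task = value_per_task - inference_cost_per_task
    return tasks_per_month * margin_per_task - fixed_monthly_cost


# Hypothetical use case: 10,000 tasks a month, each worth $0.50 when automated,
# with $1,000 a month of fixed integration and monitoring overhead.
TASKS = 10_000
VALUE = 0.50
OVERHEAD = 1_000.0

at_current_price = monthly_net_value(TASKS, VALUE, 0.60, OVERHEAD)  # inference at $0.60 per task
at_tenth_price = monthly_net_value(TASKS, VALUE, 0.06, OVERHEAD)    # same task, 10x cheaper inference

print(f"current price: {at_current_price:,.0f} per month")
print(f"10x cheaper:   {at_tenth_price:,.0f} per month")
```

With these assumed inputs the use case loses money at the current price and becomes clearly positive once inference is ten times cheaper, with no change to the tool, the workflow, or the people using it.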

Who benefits from the wrong diagnosis

Here's where the incentive structure matters. The AI industry's entire go-to-market motion depends on framing the blue-to-red distance as a behavioral problem. Behavioral problems are solved by purchasing software. Every SaaS vendor, every model provider, every platform company wants you to believe that the gap is about adoption. Your people just need to use the tools. Here's a dashboard. Here's a copilot.

For the knowledge worker sitting in a tech company, that might be true. For most others, operating in asset-heavy environments or in domains where the cost of error is high, the dominant gaps are architectural and trust-based. Those are solved by building, integrating, and validating. They are slow and expensive and they don't come with a freemium tier. Nobody at a conference is eager to sell you data infrastructure work. But that is where the actual constraint lives.

The Anthropic report deserves credit. It is methodologically honest, and its finding that there is no systematic increase in unemployment in AI-exposed occupations should temper the breathless predictions. But the chart is being read as a countdown timer, as if the red will inevitably and uniformly expand to fill the blue. The ILO put it well in a paper published the same week: exposure indicators reveal technological susceptibility, not labour market outcomes. They tell you what AI could do. They don't tell you whether it's profitable, trustworthy, or architecturally possible in your context.

The most expensive pattern in enterprise AI right now looks like this: a company sees the chart, diagnoses a behavioral gap, buys a stack of AI tools, launches a training program, and waits for productivity gains. Six months later, they have a pile of seat licenses, dashboards that get opened once a month, and an executive team wondering why the demo looked so good. The gap was never behavioral. It was architectural. Or it was trust. Or the theoretical capability was overstated. The tools couldn't help because the problem was upstream of the tools.

Diagnose your gap before you buy anything. The blue is aspirational. The red is real. And the distance between them is yours to close, on your terms, once you understand what's actually in the way.

Enjoy your Sunday!

When Bharath Moro, Head of Products at Moative, explains guardrails using a salt shaker and tablecloth, guests usually take notes on their napkins.
